How to Configure LUN Masking with Openfiler 2.99 and ESXi 4.1

Posted: September 11, 2011. Filed under: Storage, VMware. Tags: esxi, lun masking, openfiler, vmware.

Note: If you’d like to see screenshots for this article, check out this other post.

I’ve been building a test environment to play with vSphere 4.1 before we begin our implementation. In order to experiment with the enterprise features of vSphere I needed shared storage between my ESXi hosts. As always, I turned to Openfiler. Now, I’ve deployed Openfiler before, but it was just one ESXi host and a single LUN. It was easy. There were plenty of good walkthroughs on how to set this up in such a way. But using the Google-izer, I couldn’t find a single page that explained how to configure Openfiler for shared storage between multiple hosts. When I finally got it working, I felt accomplished and decided to document the process for future reference. Maybe someone out there will find it useful, too.

Before I begin, let me say that this exercise was purely for learning and is not in a production environment. I’m not trying to optimize anything, performance or otherwise. I’m also not worrying about security. You’ll see I don’t configure CHAP at all.

Mistakes I made the first time

I mentioned I configured Openfiler some time ago for a single ESXi host and a single LUN. I realized when I tried configuring shared storage that the old way didn’t work. I’d like to share what I learned won’t work for shared storage using Openfiler. When I get into the configuration, I’ll go into more details.

Network Access to Entire Subnets

When I configured a single ESXi host to connect to a single LUN presented by Openfiler, I allowed any device on the back-end iSCSI subnet to access the LUN. Since I only had a single ESXi host and the Openfiler box on the back-end subnet, this configuration worked fine. Any subnet scan for iSCSI targets would reveal the only LUN presented. It was a sloppy and insecure way to operate. I could have been more specific and masked the LUN so that only the ESXi host could see it.

On the System tab in the Openfiler configuration page, you can scroll down and view the Network Access Configuration. The entries here will allow subnets or specific hosts to view LUNs. My first configuration looked like this:

| Name | Network/Host | Netmask | Type |
| --- | --- | --- | --- |
| iSCSI Subnet | 192.168.1.0 | 255.255.255.0 | Share |

Although you can present an Openfiler LUN to an entire subnet, for shared storage you must be very specific in your presentations, thus masking your LUNs to specific hosts. The correct way to set up the Network Access Configuration in Openfiler is to use each ESXi host’s IP address and a /32 subnet mask, thereby specifying an individual host and not a subnet.
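The difference between a subnet entry and a single-host entry is easy to check before typing anything into Openfiler. The following is a minimal Python sketch using the standard `ipaddress` module; it is not something Openfiler provides, and the host address 172.16.1.11 is a made-up placeholder for an ESXi host's iSCSI address.

```python
import ipaddress


def entry_size(network_host: str, netmask: str) -> int:
    """Return how many addresses a Network/Host + Netmask pair covers."""
    # strict=False tolerates host bits being set (e.g. a host IP with a /24 mask)
    net = ipaddress.ip_network(f"{network_host}/{netmask}", strict=False)
    return net.num_addresses


def is_single_host(network_host: str, netmask: str) -> bool:
    """True only when the entry names exactly one address (a /32 mask)."""
    return entry_size(network_host, netmask) == 1


# A subnet-wide entry like the one in the table above covers 256 addresses.
print(entry_size("192.168.1.0", "255.255.255.0"))

# A per-host entry (placeholder address) covers exactly one.
print(is_single_host("172.16.1.11", "255.255.255.255"))
```

An entry that fails `is_single_host` is presenting the LUN to more than one address, which is the situation this post is trying to avoid.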

When I presented the LUN to the entire subnet, the problem showed up when I scanned for iSCSI targets from the first ESXi host. It certainly saw the one Openfiler device, but it also saw all three iSCSI targets I created as three different paths to the same device. The first host connected to all the targets it could see, not leaving any targets for the other two ESXi hosts in my environment.

Dynamic Discovery instead of Static Discovery

With the single ESXi host and single LUN scenario, it was ok to configure Dynamic Discovery in the properties of the built-in VMware iSCSI Initiator. Simply enter the IP address of the Openfiler box and rescan your iSCSI software adapter. The only LUN on the subnet shows up just fine. But configuring Dynamic Discovery, even with the Network Access Configuration above correctly set, did not reveal any iSCSI targets on the Openfiler box.

Instead of configuring Dynamic Discovery, I used Static Discovery and specified the iSCSI target’s IQN in addition to the Openfiler’s iSCSI IP address.

Building an Openfiler VM inside ESXi 4.1

Just to be clear, let me walk you through the steps I took to build my Openfiler VM. I downloaded the 64-bit Openfiler ISO. I chose a remote datastore I already had working to store my Openfiler VM. You can choose local storage or any other storage you have available. I stuck with the default Virtual Machine 7 hardware. My understanding was that Openfiler is built on Debian 5, so I chose Debian GNU/Linux 5 (64-bit) as the guest OS. (Openfiler 2.99 is actually based on rPath Linux, but this guest OS setting worked for me.)

I gave the box a single vCPU and 2 GB of RAM. I also chose to separate my VM traffic from my iSCSI traffic. I have two vNetwork Standard switches, one for each network. Kind of like a front-end network and a back-end network. My front-end vSwitch is called Testlab. My back-end vSwitch is called iSCSI traffic. I configured the Openfiler VM with two network adapters. I also left the default network adapters as Intel E1000s. I did not test Openfiler with VMXNET3 network adapters so I don’t know if these virtual adapters will work with Openfiler.

I left the default SCSI controller and gave it one 10 GB virtual hard disk for the OS install. I went back and added a second virtual hard disk of 100 GB to store the data. I tried a configuration with only one 100 GB virtual hard disk, installing the OS on one partition and using the rest for storage, but I could not get Openfiler to use the remaining space for storage. So I just added a second hard disk. Finish the rest of the VM build with all default values.

Before powering on the VM for the first time, right-click the VM and go to Edit Settings… Add a second hard drive of 100 GB using default values for everything else.

Installing Openfiler 2.99

Power on the Openfiler VM, attach the ISO, and reboot so the VM boots from it into the Openfiler installer. Press Enter or just wait a few seconds for it to continue from the first page.

Press “Next” to start the installation. Press “Next” after choosing your language. You may get a warning because the installer recognizes an uninitialized hard drive. Realize you’ll be losing any data present on the hard drive and press “Yes” to continue. On the partition page, both hard drives you added are checked by default as installation drives. You’ll want to uncheck the 100 GB hard drive labeled sdb. We’ll configure this later. You may want to check the box next to “Review and modify partitioning layout.” Click “Next.”

You’ll get another warning about losing any data present on your hard drives. This is, of course, ok since we know they’re new drives. Click “Yes” to continue. If you checked the box to review the partitioning layout, you can see the drives you added, labeled /dev/sda for the 10 GB hard drive where you’ll install Openfiler and, scrolling down, you’ll see 100 GB of free space where your data will go. Click “Next” to continue.

Choosing the default EXTLINUX boot loader worked fine for me. Go ahead and click “Next.” On the networking page, go ahead and check the box next to each network interface, eth0 and eth1. Highlight each of them in turn and click “Edit” to configure your IP settings. My first network interface, eth0, is connected to my management and VM traffic network – my Testlab vSwitch. So I’ll configure it to have an IP address in that subnet. I disabled IPv6 support because I know I’m not using it. You can leave it enabled if you want. It won’t hurt anything.

Configure your second network interface, eth1, for an IP address in your iSCSI traffic subnet. I separated my iSCSI and VM traffic with vNetwork Standard switches so each interface will have a different IP address. For a test lab environment, it’s ok to run your iSCSI traffic on the same subnet as your management or VM traffic. Just don’t expect great performance.

My back-end network is not routed to my front-end network. My ESXi hosts and my Openfiler each have management interfaces residing on the front-end network and iSCSI traffic interfaces residing on the back-end network. I have a test lab Active Directory domain set up on the front end network. When you set up a hostname for your Openfiler box, unless configured later on within the web interface to work on an Active Directory domain, you’ll have to manually add DNS records to resolve the hostname entered here. Add in default gateway and DNS information here. I’m not working on a routed network at all so I have no gateway information. Click “Next” when finished.

I was warned about not having a default gateway but since I know what I’m doing, I can safely ignore this warning. Feel free to configure a time zone and click “Next” when done. And finally, enter a root password. The root password can be used at the Openfiler console shell. Everything else will use the administrative web interface which uses a different username and password. Press “Next” when done. If you’re happy with your choices so far, click “Next” to actually install Openfiler. You’ll see Openfiler formatting your configured partitions as requested. Your 100 GB data partition will be formatted by ESXi for VMFS3 later on when you add storage to your hosts. Once the installation is finished, click “Reboot” and we’ll start configuring Openfiler.

Configuring a Single LUN with Multiple iSCSI Targets

You’ll see the bootloader screen each time Openfiler boots. Either press enter to continue or wait the allotted time and Openfiler will start automatically.

Once you see the screen prompting for your Openfiler login and showing you the web administration GUI URL, you can close the console to the Openfiler VM. You’ll mainly work from Openfiler’s web interface, not the console command line. Take note of the web administration address. It’s HTTPS and you have to specify port 446. The IP address of the web interface is the IP address of the first network interface, eth0. You can’t manage Openfiler from the second network interface, eth1.

From a computer connected to your front-end network, type in the address to the Openfiler web interface. In this case, I typed in https://10.240.1.105:446. Your web browser may holler about an untrusted certificate. Just ignore it and continue on. Once at the Openfiler web login screen, enter the default username and password of “openfiler” and “password” without the quotes.

Once logged in, let’s start configuring! On the top menu, go to “System.”

Scroll down and enter your ESXi host information into the Network Access Configuration. The purpose of this blog post is to show you how to configure shared storage using Openfiler so you should have at least two ESXi hosts. In my test lab, I have three, so I have three items to configure on this page. You can put anything you like in the “Name” column, but I put something descriptive that lets me know for which device I’m configuring access. Be careful here. As I mentioned at the beginning of my post in the Mistakes I Made section, for shared storage, you’ll want to specify an exact host for which you’ll want to allow access. This means specifying the IP addresses of the network adapters on each ESXi host you’ll be using for iSCSI traffic under the “Network/Host” column. For each ESXi host, under the “Netmask” column, be sure to use 255.255.255.255. You can only add or delete one item at a time, pressing update after each change.

When you have your ESXi hosts configured, click “Volumes” on the top menu, then “Block Devices” on the menu on the right, then click on your second hard drive, /dev/sdb.

Scroll down to create a partition. The only change you need to make is to make the “Partition Type” Physical Volume instead of the default Extended Partition. Click “Create” after making this change.

Click “Volume Groups” in the right hand menu and fill in the required information. A volume group name of volume1 should be fine. Check the box next to your /dev/sdb1 and click “Add Volume Group.” Once the volume group is created, you can see it listed at the top of the page. Click “Add Volume.”

Scroll down and fill in the information for creating a volume. Give it any Volume Name you like. I chose the very original “filer1” with a Volume Description of “data.” Be sure to move the Required Space slider all the way to the right and fill up your entire partition. If you leave the default setting of 32 MB (which is ridiculously small), you’ll run into problems later. Also, be sure to change the Filesystem/Volume Type to block(iSCSI,FC,etc). Click “Create” when finished.

In the overhead menu, click on “Services.” Notice that the iSCSI Target service is set to “Stopped” by default. You won’t be able to add iSCSI Targets without starting this service. Go ahead and click on “Start” to start the service. You’ll also want to click “Enable” for the iSCSI service. If you don’t enable the service, it will not start automatically after a reboot and you’ll lose any iSCSI connections you have to the Openfiler box. You’ll see that the iSCSI Target service is now running.

Click on “Volumes” in the top menu then click on iSCSI Targets in the right hand menu. This should bring you to the screen below where you can start adding iSCSI targets. Under the heading, “Add new iSCSI Target,” click the “Add” button as many times as you have ESXi hosts. In my case, I have three ESXi hosts, so I clicked “Add” three times to create three iSCSI targets. If you have two ESXi hosts, you’ll create two iSCSI targets. Each ESXi host will connect to a single iSCSI target. We’ll make sure that each iSCSI target you create gets mapped to the same LUN.

Once you have your iSCSI targets created, you’ll need to configure each one. You can see all your created iSCSI targets if you expand the drop down box. I created three targets so I see three targets. Each target will make use of the circled menus: Target Configuration, LUN Mapping, and Network ACL. For consistency, choose the top-most iSCSI target from the drop down list. In this case, I’ll choose the target whose last two digits are “9b.” Click the “Change” button next to the target to switch to that target’s settings. Click the LUN Mapping item.

The LUN Mapping screen is where you map iSCSI Targets to iSCSI LUNs. We’ve created a single LUN, so the only option we have is to map our first iSCSI target to our only iSCSI LUN. Click the “Map” button on this screen.

Next, click the “Network ACL” menu. Notice the iSCSI target name, the long string starting with “iqn.” IQN stands for iSCSI Qualified Name. It’s just a unique way of addressing an iSCSI device, similar in purpose to a MAC address. You’ll also see each item you listed under the Network Access Configuration page. By default, each ESXi host listed is denied access to all iSCSI targets. You’ll have to explicitly tell Openfiler which ESXi host you’ll want to have access to which iSCSI target. For simplicity, we’ll configure the first ESXi host to connect to the first iSCSI target, the second ESXi host to connect to the second iSCSI target, and third ESXi host to connect to the third iSCSI target.

The Network ACL screen is where you’ll actually mask ESXi hosts from iSCSI targets. Since we’re working on the first iSCSI target, we’ll configure the first ESXi host, esx01, to “Allow” in the Access column. We’ll leave the other hosts set to deny. When we click the “Update” button, we’ve now masked the first iSCSI target so that only the first ESXi host can see it. The other two hosts cannot.

Follow this process for the other two iSCSI targets. Click on “Target Configuration” from the top menu. Use the drop down list to highlight the second iSCSI target. Click “Change.” Click on “LUN Mapping” from the top menu. Simply click “Map,” then click on “Network ACL” from the top menu. For the second host, esx02 in this case, choose “Allow” from the drop down menu in the Access column. Leave the other two ESXi hosts set to deny.

Finally, configure the last iSCSI target. Click on “Target Configuration” from the top menu. Use the drop down list to highlight the third iSCSI target. Click “Change.” Click on “LUN Mapping” from the top menu. Click “Map,” then click on “Network ACL” from the top menu. For the third host, esx03, choose “Allow” from the drop down menu in the Access column. Leave the other two ESXi hosts set to deny.
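With three targets and three hosts, it is easy to leave an extra “Allow” behind. As a rough way to double-check the Network ACL settings on paper, here is a small Python sketch that models the Access column as a dictionary and reports anything that breaks the one-host-per-target rule. The labels target1 through target3 are hypothetical stand-ins (real targets are identified by their IQNs), and this only checks values you type in yourself; it does not read anything from Openfiler.

```python
from collections import Counter

# Access column per target, as set on each target's Network ACL page.
# "target1".."target3" are made-up labels for the three IQNs.
acl = {
    "target1": {"esx01": "Allow", "esx02": "Deny", "esx03": "Deny"},
    "target2": {"esx01": "Deny", "esx02": "Allow", "esx03": "Deny"},
    "target3": {"esx01": "Deny", "esx02": "Deny", "esx03": "Allow"},
}


def masking_problems(acl):
    """Return a list of problems; an empty list means one host per target."""
    problems = []
    allowed_counts = Counter()
    for target, rules in acl.items():
        allowed = [host for host, access in rules.items() if access == "Allow"]
        if len(allowed) != 1:
            problems.append(f"{target}: expected 1 allowed host, found {len(allowed)}")
        allowed_counts.update(allowed)
    # A host allowed on several targets would see several paths to the LUN.
    for host, count in allowed_counts.items():
        if count > 1:
            problems.append(f"{host}: allowed on {count} targets")
    # A host allowed nowhere will not see the shared storage at all.
    all_hosts = {host for rules in acl.values() for host in rules}
    for host in sorted(all_hosts - set(allowed_counts)):
        problems.append(f"{host}: not allowed on any target")
    return problems


print(masking_problems(acl))  # [] when the ACL matches the layout described above
```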

You are now done configuring Openfiler for shared storage, but don’t close your web browser. You’ll need the IQNs of the iSCSI targets you created. The final step is to configure each ESXi host in your environment to “see” its respective iSCSI target and thus the shared storage to which each iSCSI target is mapped.

Configuring ESXi 4.1 Software iSCSI Storage Adapters

Using your vSphere client, connect to your vCenter Server. In the screenshot below, I’m viewing the first ESXi host, esx01, and I’m on the Configuration tab viewing the Storage Adapters. I’ve highlighted the built-in VMware iSCSI Software Adapter. By highlighting the iSCSI adapter, I can now modify its properties. In the box below the adapters list, click on “Properties.”

Clicking “Properties” brings up the iSCSI Initiator Properties dialogue box. If you aren’t already using the iSCSI software initiator, you’ll first have to enable it. Do this by clicking, “Configure.” In the General Properties dialogue box that appears, check the box next to “Enabled,” and click “OK” to close the General Properties dialogue box.

Since you still have your web browser open to Openfiler, go back to Volumes, iSCSI Targets. Choose the first iSCSI target from the drop down list and click “Change.” Click on Network ACL from the top menu. You’ll see the IQN of the iSCSI target at the top of the page. You need to copy this IQN, everything in between the quotes, into the iSCSI Initiator Properties of your first ESXi host. You can either write down the IQN and type it into the iSCSI Initiator Properties or simply copy and paste it. Obviously, you’ll reduce the chances of mistyping if you copy and paste.

Back in the vSphere client and the iSCSI Initiator Properties dialogue box, click on the Static Discovery tab and click the “Add” button at the bottom. The Add Static Target Server dialogue box appears. Enter the IP address of the Openfiler box. Remember, Openfiler has two network cards; one for the management network and one for iSCSI traffic. In this exercise, the IP address of my Openfiler management NIC is 10.240.1.205 and the IP address of my Openfiler iSCSI NIC is 172.16.1.205. Enter Openfiler’s iSCSI NIC address in the iSCSI Server edit box and the IQN of the first iSCSI target. The port number should be left at its default 3260 value.
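Since a mistyped IQN is an easy mistake to make, a quick format check before entering the values can help. Below is a minimal Python sketch that strips stray quotes, checks that the string has the general `iqn.yyyy-mm.reversed-domain:identifier` shape, and pairs it with the server address and default port. The IQN shown is a made-up placeholder, not one of my actual targets, and the pattern is a loose sanity check rather than a full validator.

```python
import re

# General shape: iqn.<yyyy-mm>.<reversed domain>[:<optional identifier>]
IQN_PATTERN = re.compile(r"^iqn\.\d{4}-\d{2}\.[a-z0-9][a-z0-9.\-]*(:\S+)?$")


def static_target(server_ip: str, iqn: str, port: int = 3260) -> dict:
    """Bundle the three values the Add Static Target Server dialog asks for."""
    # Drop whitespace and any quotes picked up while copying from the web page.
    iqn = iqn.strip().strip('"')
    if not IQN_PATTERN.match(iqn):
        raise ValueError(f"does not look like an IQN: {iqn!r}")
    return {"server": server_ip, "port": port, "iqn": iqn}


# Placeholder IQN for illustration only; use the one shown on the Network ACL page.
example = static_target("172.16.1.205", '"iqn.2006-01.com.openfiler:tsn.0123456789ab"')
print(example)
```

A typo such as `ign.` instead of `iqn.` raises a `ValueError` here, though a wrong character inside the identifier would still pass, so copy and paste remains the safer route.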

Click “OK” when finished and after a few seconds, your configuration should appear in the Static Discovery tab under “Discovered or manually entered iSCSI targets:.” Finally, click “Close” at the bottom of this screen. A message box will appear. Read it and click “Yes.”

If everything’s working as it should, after a few seconds you should see Openfiler populate as a device.

You can also manually rescan by right-clicking the iSCSI Software Adapter and choosing “Rescan” or by clicking “Rescan All…” in the upper right hand corner. The “Rescan All…” option takes a bit longer than right clicking the iSCSI adapter because it rescans not one, but all of your adapters for new devices.

Troubleshooting

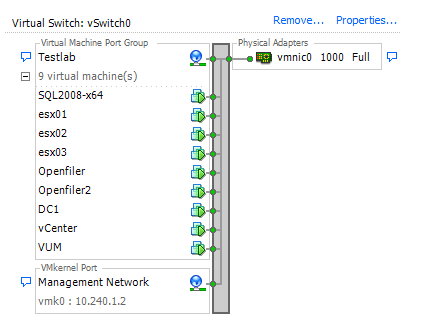

If at this point you don’t see Openfiler populate under devices, you can try to troubleshoot the problem. The first thing to check is that the ESXi host and Openfiler can see each other on the iSCSI network. By that, I mean ensure you have connectivity between the two boxes. In my case, my ESXi boxes are virtualized inside an ESXi box, and there are settings that must be configured specially for this case. In particular, on the ESXi host that runs the virtualized ESXi hosts, each vSwitch to which the virtualized ESXi hosts connect must be set to Promiscuous mode. Also, the virtual network adapters of the virtualized ESXi host must be E1000, not VMXNET3 or anything else. To check connectivity, put a VM on the same iSCSI vSwitch the ESXi hosts and Openfiler are on. Ping both the ESXi host and Openfiler. You should be able to ping them both. For reference, my top level virtual networking looks like this:

My ESXi hosts and each Openfiler VM show up on both switches because they have two virtual NICs each, one connecting to the Testlab switch and therefore the management network, and one connected to the “back-end” iSCSI network. To test connectivity, simply move a VM, say the one labeled VUM, to the iSCSI vSwitch, give it an IP address in that subnet, and try pinging the ESXi host and Openfiler.
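Ping only proves that the two boxes can reach each other. If the test VM has Python available, a complementary check is to see whether the iSCSI port itself answers. This is a minimal sketch, not part of my original procedure; it assumes the default port 3260 mentioned earlier and uses only the standard `socket` module.

```python
import socket


def iscsi_port_open(host: str, port: int = 3260, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within the timeout."""
    try:
        # create_connection raises OSError (including timeouts) on failure.
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False
```

From a VM on the iSCSI vSwitch, calling `iscsi_port_open("172.16.1.205")` against Openfiler’s iSCSI NIC should return `True` when the iSCSI Target service is running. A `False` result with a successful ping points toward the service being stopped rather than a network problem.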

If you have connectivity between each ESXi host and Openfiler, then go back and check your Openfiler settings. Make sure you have created as many iSCSI targets as there are ESXi hosts in your environment that need to connect to shared storage (on the iSCSI Targets page), that each iSCSI target is mapped to a LUN (on the LUN Mapping page), and that only one ESXi host is allowed access to each iSCSI target (on the Network ACL page).

Also, if you typed in the IQN of an iSCSI target into the ESXi Static Discovery dialogue box, make sure you didn’t mistype it. Your best bet to avoid mistyping is to copy and paste the IQN from the Openfiler web interface directly into the ESXi Static Discovery dialogue box. I mistyped my first IQN and ran into this problem. From now on, I try to copy and paste.

One of the first problems I had was that my first ESXi host was seeing all three iSCSI targets I created in Openfiler. It would see the LUN as a device, but by changing the Storage Adapters view from Devices to Paths, I saw that this one ESXi host was seeing all three targets as different paths to the same LUN. This is technically correct, but the problem was that the first ESXi host connected to all three targets, leaving no targets to which my other two ESXi hosts could connect. This problem occurred because I had presented all three iSCSI targets to the entire subnet. In Network Access Configuration within Openfiler, I had input an entire subnet, 172.16.1.0 and 255.255.255.0, instead of creating three separate host entries to which I could mask my LUN. When the first ESXi host scanned its iSCSI adapter, it saw all three iSCSI targets and connected to them. Thus was born the need for LUN masking.
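The effect of the two configurations can be illustrated with a small model. The Python sketch below only answers “which targets would accept this host’s address?” using the standard `ipaddress` module; it is a simplification and does not model how ESXi actually logs in to targets. The host addresses and the target1 through target3 labels are made-up placeholders.

```python
import ipaddress


def visible_targets(host_ip, target_acls):
    """List the targets whose allowed networks contain host_ip."""
    ip = ipaddress.ip_address(host_ip)
    return [
        target
        for target, allowed in target_acls.items()
        if any(ip in ipaddress.ip_network(net) for net in allowed)
    ]


# Placeholder iSCSI addresses for the three ESXi hosts.
hosts = {"esx01": "172.16.1.11", "esx02": "172.16.1.12", "esx03": "172.16.1.13"}

# The mistake: every target allows the whole subnet.
subnet_wide = {t: ["172.16.1.0/24"] for t in ("target1", "target2", "target3")}

# The fix: each target allows exactly one host with a /32 mask.
masked = {
    "target1": ["172.16.1.11/32"],
    "target2": ["172.16.1.12/32"],
    "target3": ["172.16.1.13/32"],
}

print(visible_targets(hosts["esx01"], subnet_wide))  # ['target1', 'target2', 'target3']
print(visible_targets(hosts["esx01"], masked))       # ['target1']
```

With the subnet-wide entry, the first host matches all three targets, which corresponds to the three paths I saw in the Paths view. With per-host /32 entries, each host matches only its own target.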

Adding Shared Storage to ESXi Hosts

Adding the shared storage at this point is the same as adding non-shared storage. On the configuration tab, click on Storage in the left hand menu and then in the upper right hand corner, click on Add Storage…

The Add Storage wizard appears. Leave the default selection of Disk/LUN and click Next.

You should see the same iSCSI device you saw in the Storage Adapters view. Highlight it and click Next.

Verify the layout and click Next.

Give your new datastore a name, something original like openfiler or datastore. Click Next.

Review the Add Storage summary and click Finish.

ESXi will create the datastore and rescan VMFS. Once it’s complete, you should have Normal status next to the datastore identification.

And congratulations! Hopefully, you have successfully masked a LUN to multiple ESXi hosts. You can now do all that is true and good that can be done with shared storage and ESXi and vSphere 4.1 like vMotion, HA, and DRS.

Below is the idea behind what this blog post is all about. We have three ESXi hosts, each, of course, with its own built-in VMware iSCSI software initiator, iSCSI targets created within Openfiler version 2.99, and remote storage of 200 GB total. I configured the first Openfiler prior to taking the screenshots for this post. The screenshots came from adding the second Openfiler VM and configuring it. The end result is that I have three ESXi hosts all sharing two LUNs.

This is a fantastic set of instructions! I have now got a virtual Openfiler providing iscsi storage to my virtual ESXi4.1 servers for my virtual virtual machines. I am certainly going to pass on this link to my students! Thanks Mike!

Thanks for your patronage, Mark. I was pretty excited to get this up and running, too. It’s very useful for learning. If you have any questions or suggestions, please let me know. All the best. – Mike


Hi,

I have been having a little bit of trouble with some things in your instructions. They are great and I can create an iSCSI target and get the ESXi hosts to point to it, but only on the management network, where I am using 10.10.255.x /16. When I try it on the storage network using 192.168.1.x /24, I can’t connect to it. It feels kind of like a connection issue, but we can ping the ESXi host from the Openfiler box using both management and storage IP addresses.

Do you have any ideas as to why this might be happening? Is there any chance that you could add some screen shots for this so I can see how mine is different?

P.S. I am one of Mark’s students

Hi Scott. I’ve torn down that portion of the lab but I’d be happy to rebuild it and get screenshots for you. Screenshots were included in my original draft post, but I’m working in Afghanistan right now and my upload speeds are hideous – too slow to upload much in the way of screenshots – I was happy to just get the text uploaded! I’ll rebuild the lab and post screenshots – one terribly slow upload after another 🙂

Until then, ensure your Openfiler Network Access Configuration is correct. You should have one entry for each ESXi host you’re using for shared storage. In the Network/Host column, you should have the IP address of each ESXi host’s iSCSI network NIC – not the management IP. In the Netmask column, you should have 255.255.255.255 to tell Openfiler that you’re designating a single IP address for access to storage.

Also, in the Network ACL portion of your Target Configuration, ensure you have set Access to “Allow” in the drop down box for each ESXi host you want to access each target. Each host should only have access to one target (the rest should be set to “Deny”) – but you can have multiple targets for each LUN – thus sharing the LUN.

Thanks for helping us out Mike – but please don’t go to too much trouble. Would it be easier if Scott posted the pics then you could comment on them rather than pushing them up at your end? Cheers! Mark

Hi Mark. Feel free to have Scott post his pics, but I’ll go ahead and post what I have, as well. Turns out I still have my original draft with screenshots so I don’t have to rebuild the lab. It’s my pleasure, either way…

That’s interesting about the jumbo frames vmkernel support in ESXi. I had missed that tidbit and was mostly going by the iSCSI configuration guide; however, looking deeper, it never specifically says ESXi, just ESX. I’ve implemented it in production with jumbo frames at several sites now and haven’t had any problems, but now I’ll probably be a little more hesitant since it is explicitly not supported on ESXi.

I got it working 🙂

The problem was partly the network connection here at the institute. I changed my storage address and changed the subnet mask as you suggested. The other thing I did was add a VMkernel port to a vSwitch on the ESXi server. This gave it an IP address and it seems to be working.

Thank you so much for your instructions and the time you have put into supporting us. I have really appreciated it.

Hi Scott. Re-reading my post, I see I left out the details of the virtualized ESXi host networking. As you found out, each virtual ESXi host must have two VMkernel port groups – one for the management network and one for the iSCSI network. If an ESXi box, whether virtual or physical, has only one NIC, you can add both VMkernel port groups to the same vSwitch, giving them different IP addresses and separating them with VLANs. Of course, this means you’d be sending both management traffic and iSCSI traffic over the same link. In a test lab, that’s fine. But if it can be avoided, why not? This would be closer to what you’d find in a production environment anyway. With the virtualized ESXi boxes, you can add two virtual adapters and configure one on each of two vSwitches. Then you can separate your management and iSCSI VMkernel port groups – one on each vSwitch – to avoid sending both types of traffic over the same link and to maximize (virtual) throughput. You wouldn’t even need VLANs with this configuration. Of course, for learning and building a test lab, that’s fine. A production network would benefit from a better design. Thanks for reading!


Hey Dude, thanks so much. 2.99 was giving me a hard time. cheers.
