Useful commands for Cisco Nexus zoning

Posted: February 16, 2015 Filed under: Cisco Nexus, Networking, Storage | Tags: add zones, alias, cisco nexus, device, device-alias, fibre channel, how to add zones to cisco nexus, native, nexus 5k, nexus fc, nexus native fc, zone, zones, zoneset

After implementing Cisco Nexus 5ks that include native Fibre Channel switching for shops that usually don’t have dedicated SAN guys, I’m often called up sometime later to offer a refresher on how to add zones. I usually share this tidbit via email, but here it is for the internets. These commands are very similar on newer MDS models, as well.
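As a rough refresher, adding a zone on a Nexus 5k usually comes down to a device alias, a zone, and a zoneset activation. The sketch below assumes VSAN 10, a zoneset called FABRIC-A, and made-up alias names and pWWNs; none of those values come from a real fabric, so substitute your own.

```
! Hypothetical names, VSAN number, and pWWNs throughout; adjust to your fabric.
configure terminal

! Give each pWWN a friendly name
device-alias database
  device-alias name ESX01-HBA0 pwwn 20:00:00:25:b5:00:00:01
  device-alias name NETAPP01-0C pwwn 50:0a:09:81:00:00:00:01
device-alias commit

! Create the zone and add both members by alias
zone name ESX01-HBA0_NETAPP01-0C vsan 10
  member device-alias ESX01-HBA0
  member device-alias NETAPP01-0C

! Add the zone to the zoneset, then activate the zoneset
zoneset name FABRIC-A vsan 10
  member ESX01-HBA0_NETAPP01-0C
zoneset activate name FABRIC-A vsan 10

! Verify, then save
show zoneset active vsan 10
copy running-config startup-config
```

If the VSAN runs enhanced zoning, a `zone commit vsan 10` is also needed before the change takes effect.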

Integrating Cisco MDS 9124 switches with Nexus 5500s

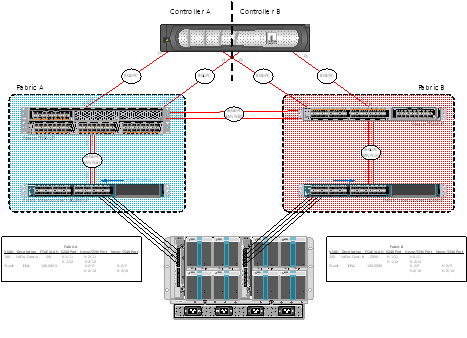

Posted: October 28, 2013 Filed under: Cisco Nexus, Networking | Tags: 5500, 5548, 5596, 55xx, 9124, Cisco, cisco mds, fc, fibre channel, mds, Nexus

I wanted to take a short minute and document the addition of a few Cisco MDS 9124s to our test lab at work. The purpose of the addition is simply to show how the devices function and work together. See my previous post on configuring native FC over a Nexus 5548 and 5596. The FC-specific portions of the MDS config are very similar to the Nexus line. Here’s what the middle state looks like. I haven’t taken the time to move the NetApp FC links to the MDS switches yet (or the UCS FC links instead), but the port provisioning process will be similar to what is already documented in this post and the Nexus post. The Visio of what’s configured is below, followed by my MDS configuration notes.

Cisco Nexus Fibre Channel configuration template

Posted: May 23, 2013 Filed under: Cisco Nexus, Networking, Storage | Tags: 5548up, 5596up, cisco nexus, cisco ucs, device-alias, eisl, e_port, fc, fc config, fc configuration, fc end host mode, fc switch mode, fc ucs, fibre channel, fibre channel configuration template, fibre channel template, f_port, isl, n_port, te_port, tf_port, ucs, vsan, zone, zoneset, zoning | 6 Comments

I recently had the opportunity to configure native fibre channel in my test lab at work using Nexus 55xx series switches and Cisco’s UCS. In this post I’ll share my templatized fibre channel configuration in a somewhat ordered way, at least from the Nexus point of view. This test lab was configured with the attitude that it should show off the capabilities of the hardware and software. Concepts included in this initial configuration include NPIV, NPV, SAN port-channels, F_Port trunking, VSANs, device aliases, and of course, standard FC concepts like zones and zonesets.

Let me first share the end-state as of today, what I’ll call Phase I. I’ll explain what my initial plan was for Phase I and, after learning a bit more, what I plan to do for Phase II. Please feel free to correct me in the comments below – I made a lot of mistakes configuring this and I wouldn’t be surprised if there’re a few more in there.
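To give a feel for how a few of those concepts fit together on the Nexus side, here is a minimal sketch of NPIV, a VSAN, and a trunking F_Port SAN port-channel facing UCS. It is not the template from the full post; the VSAN number, port-channel ID, and fc interface numbers are assumptions for illustration.

```
! Hypothetical VSAN, port-channel, and interface numbers; not the lab's actual values.
feature fcoe                  ! enables FC on the 55xx (requires the storage license)
feature npiv                  ! lets the NPV-mode fabric interconnect log in multiple pWWNs
feature fport-channel-trunk   ! allows F_Port trunking and F_Port port-channels

vsan database
  vsan 10 name FABRIC-A
  vsan 10 interface san-port-channel 1

interface san-port-channel 1
  channel mode active
  switchport mode F
  switchport trunk mode on

interface fc1/31-32
  switchport mode F
  channel-group 1
  no shutdown

! Verify
show san-port-channel summary
show flogi database
```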

How to configure SPAN on a Nexus 55xx

Posted: April 13, 2013 Filed under: Cisco Nexus, Networking | Tags: 5k span, cisco nexus, configure span, how to configure span, Nexus, nexus span, span, switch port analyzer | 4 Comments

I’ve recently needed to configure SPAN a couple times in the lab at work to troubleshoot some issues – or at least to see what I could see. It wasn’t exactly glamorous work, but somebody had to do it. Now, I had to look it up the first time because it had probably been a good year since I’d done it. The document I used is here. Well, the second time I needed to configure SPAN was shortly after the first. I was annoyed that I had to look at the same document and skip over all the paragraphs to get to the commands, then sort out the FC ports and other commands I didn’t need. So for my benefit, and perhaps yours, here’s my short and sweet version of how to configure SPAN on a Nexus 5k.
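For quick reference, a bare-bones Ethernet SPAN session might look like the sketch below. The session number and the source and destination interfaces are assumptions for illustration, not the ports used in the lab.

```
! Hypothetical session number and ports; adjust to your environment.
configure terminal

! The destination port must be put into monitor mode first
interface ethernet 1/10
  switchport monitor
  no shutdown

! Mirror both directions of traffic from the source port
monitor session 1
  source interface ethernet 1/1 both
  destination interface ethernet 1/10
  no shut

! Verify that the session is up
show monitor session 1
```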

My OTV Take

Posted: April 1, 2013 Filed under: Cisco Nexus, Networking | Tags: cisco otv, data center interconnect, datacenter interconnect, dci, l2 dci, layer 2 dci, otv | 3 Comments

After my recent DFW VMUG presentation where I spoke on the topic, a friend emailed me and asked what I thought about OTV.

“You mentioned that you were against OTV. Curious on your take on this, as we are using it across two datacenters using N7K, UCS, NetApp and VMware.”

I’d like to share my response to him here.

Please don’t get me wrong. If one is forced to implement a Layer 2 Data Center Interconnect (DCI), OTV is probably the best solution. Sometimes, L2 connectivity between data centers is a functional requirement – perhaps even a constraint. In these cases, one should look at the benefits and risks of implementing an L2 DCI and then make an informed decision on whether they should continue with such a deployment. Should they choose to deploy OTV, someone needs to accept the risks associated with OTV in its current implementation.

Typical ESXi host-facing switchport configs

Posted: November 8, 2012 Filed under: Cisco Nexus, Networking, VMware | Tags: bpdu, bpduguard, config, esxi, esxi switch config, switch configuration, switch port, switchport, switchport nonegotiate, switchport trunk allowed vlan | 6 Comments

I was troubleshooting a production issue a couple days ago that led me to ask our Networking team for the switchport configs of the ESXi 5.0 hosts that pass virtual machine traffic. Here’s a snippet of what they came back with for two particular ports:

    interface GigabitEthernet1/5
     description -=R910 ESX# 1 – Front Side=-
     switchport mode trunk
    end

    interface GigabitEthernet1/6
     description -=R910 ESX# 1 – Front Side=-
    end

Well. Not only do I see our problem (no config *at all* on one port!), but I see something else that troubles me. Our ESXi host-facing ports are only configured as trunk ports. Absolutely *nothing* else. Well, this just won’t do.
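For comparison, here is a sketch of the sort of thing I would expect to see on an ESXi host-facing trunk on a Catalyst-style IOS switch. The description and the VLAN list are placeholders, and the exact set of commands is a common baseline rather than a universal standard.

```
! Hypothetical description and VLAN IDs; adjust to your environment.
interface GigabitEthernet1/5
 description ESXi host uplink (example)
 switchport mode trunk
 ! Only carry the VLANs the host actually needs
 switchport trunk allowed vlan 10,20,30
 ! ESXi does not speak DTP, so stop sending negotiation frames
 switchport nonegotiate
 ! Skip listening/learning when the link comes up
 spanning-tree portfast trunk
 ! Err-disable the port if a BPDU ever arrives from the host side
 spanning-tree bpduguard enable
end
```

Some platforms also need `switchport trunk encapsulation dot1q` before `switchport mode trunk` is accepted.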

Yet another way to create peer keepalive link between Nexus 5ks

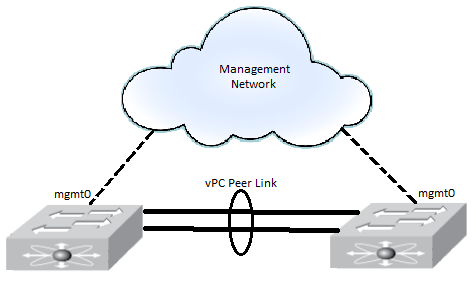

Posted: February 8, 2012 Filed under: Cisco Nexus | Tags: keep alive, keep alive link, keepalive, management 0, mgmt0, Nexus, peer keep alive, peer keepalive, peer keepalive link, peer link, peerlink, vPC, vrf management | 4 Comments

During two previous implementations, I’ve configured the peer keepalive between two Nexus 5020s as most folks have seen it done: each mgmt0 interface connected to a management network, passing both day-to-day management traffic and peer keepalive traffic. Something like this:
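In config terms, that common mgmt0 approach looks roughly like the sketch below on the first switch. The vPC domain ID and the IP addresses are assumptions for illustration; the second switch mirrors this with the source and destination addresses swapped.

```
! Hypothetical domain ID and addresses (documentation range); adjust to your network.
feature vpc

! mgmt0 lives in the management VRF by default
interface mgmt0
  ip address 192.0.2.1/24

vpc domain 1
  peer-keepalive destination 192.0.2.2 source 192.0.2.1 vrf management

! Verify
show vpc peer-keepalive
```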

Replacing a Cisco Nexus 2224 Fabric Extender

Posted: January 14, 2012 Filed under: Cisco Nexus, Networking | Tags: 2224, cisco nexus, cisco nexus fabric extender, Fabric Extender, FEX, n2k, replace 2000, replace 2224 fex, replace cisco nexus fabric extender, replace fex, replace nexus, replace nexus 2000, sh few, show few | 2 Comments

So my team and I got a call to swing by a customer’s site on our way to another job. They told us half the ports went bad on a FEX and we were to install the replacement that just arrived onsite. In this post, I’ll explain how to replace the FEX (which is trivial) and more importantly how to verify that it’s working after installation.
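As a quick preview of the verification side, these are the kinds of show commands I would run from the parent switch once the new FEX is cabled up. The FEX number 100 and the host interface are assumptions for illustration.

```
! Hypothetical FEX number and host port; adjust to your environment.

! Is the FEX discovered and Online?
show fex

! Model, serial number, software version, and pinned fabric ports
show fex 100 detail

! State of the fabric uplinks between the parent switch and the FEX
show interface fex-fabric

! Spot-check a host-facing port on the FEX
show interface ethernet 100/1/1
```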

Upgrading NX-OS on the Nexus 5020

Posted: November 24, 2011 Filed under: Cisco Nexus, Networking | Tags: nexus upgrade, nx-os, nx-os upgrade, nxos, upgrade, upgrade nx-os | 1 Comment

During another virtualization implementation at a customer’s site, I had the opportunity to upgrade Nexus 5020 switches. We upgraded from 5.0(2)N2(1) to 5.0(3)N2(1). The process was surprisingly simple. The steps include:

1. Setting up a TFTP server

2. Uploading both the NX-OS binary and the kickstart binary

3. Installing the binaries
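The steps above can be sketched on the CLI as follows. The TFTP server address is a placeholder, and the image filenames are assumed from Cisco's usual naming for 5.0(3)N2(1), so check them against the files you actually downloaded.

```
! Hypothetical TFTP server address; filenames assumed from Cisco's naming convention.

! Step 2: copy both images to bootflash over the management VRF
copy tftp://192.0.2.50/n5000-uk9-kickstart.5.0.3.N2.1.bin bootflash: vrf management
copy tftp://192.0.2.50/n5000-uk9.5.0.3.N2.1.bin bootflash: vrf management

! Optional: preview whether the upgrade will be disruptive
show install all impact kickstart bootflash:n5000-uk9-kickstart.5.0.3.N2.1.bin system bootflash:n5000-uk9.5.0.3.N2.1.bin

! Step 3: install both binaries
install all kickstart bootflash:n5000-uk9-kickstart.5.0.3.N2.1.bin system bootflash:n5000-uk9.5.0.3.N2.1.bin

! Confirm the running version afterwards
show version
```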

Configuring Cisco Nexus 5020 and 2224 Fabric Extenders for Virtual Port Channeling (vPC)

Posted: September 26, 2011 Filed under: Cisco Nexus, Networking | Tags: 2000 series, 2224, 5000 series, 5020, Cisco, Nexus, port channeling, virtual, virtual port channeling, vPC

So it’s been a long time since I’ve posted. We’ve finally finished our data center site surveys and we’re very close to starting the implementation phase. In preparation for implementation, we’ve begun testing configurations, playing with possibilities, and generally, seeing what the given hardware can do. We don’t exactly know what the architecture design team will give us to implement, but our pre-work will let us get a feel for the kinds of things we’ll be doing. For instance, we know we’ll be using virtual port channels and fabric extenders, so we’ve configured these on several of our Nexus switches. We’ll probably blow away the configs when we officially start anyways, but again, this gives us a chance to get our hands on the equipment and practice some of the same configs we’ll be using later.

The Design

Our test design will have two 5020s, each with 40 10GbE SFP ports plus a 6-port 10GbE SFP daughter card, and two 2224 fabric extenders (FEXs), each with 24 1GbE copper ports. It will not be a cross-connected FEX design because we will use servers with dual-homed 10GbE converged network adapters (CNAs), which we plan on cross-connecting. Cisco does not support a design where the FEXs and the servers are both cross-connected. Our test design looks like the diagram below. Note that the diagram shows the server connected to each FEX via copper ports. We’ll actually be connecting each server via CNA twinax cables to the 10 Gb ports on the 5020s.
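To make the design a little more concrete, here is a minimal sketch of what one 5020 might carry for a straight-through FEX plus a server vPC. Every number in it (vPC domain, port-channel IDs, FEX number, interfaces, keepalive addresses) is an assumption for illustration, and the second 5020 would mirror it with its own FEX number and swapped keepalive addresses.

```
! Hypothetical IDs, interfaces, and addresses throughout; not our final config.
feature lacp
feature vpc
feature fex

vpc domain 10
  peer-keepalive destination 192.0.2.2 source 192.0.2.1 vrf management

! vPC peer link between the two 5020s
interface ethernet 1/39-40
  switchport mode trunk
  channel-group 1 mode active
interface port-channel 1
  switchport mode trunk
  vpc peer-link

! Straight-through FEX: attached to this 5020 only
fex 100
  pinning max-links 1
interface ethernet 1/1-2
  switchport mode fex-fabric
  fex associate 100
  channel-group 100
interface port-channel 100
  switchport mode fex-fabric
  fex associate 100

! Dual-homed server CNA: one twinax link to each 5020, bundled as a vPC
interface ethernet 1/10
  switchport mode trunk
  channel-group 20 mode active
interface port-channel 20
  switchport mode trunk
  vpc 20

! Verify
show vpc brief
show fex
```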