Yet another way to create a peer keepalive link between Nexus 5ks

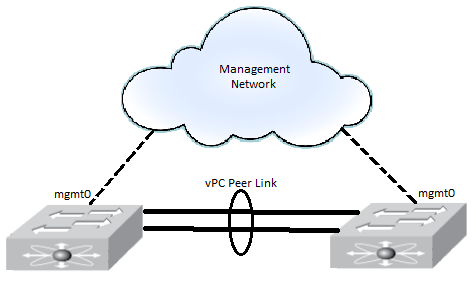

Posted: February 8, 2012 | Filed under: Cisco Nexus | Tags: keep alive, keep alive link, keepalive, management 0, mgmt0, Nexus, peer keep alive, peer keepalive, peer keepalive link, peer link, peerlink, vPC, vrf management | 4 Comments

During two previous implementations, I configured the peer keepalive between two Nexus 5020s the way most folks have seen it done: each mgmt0 interface connects to a management network and carries both day-to-day management traffic and peer keepalive traffic. Something like this:

Recently I read in a blog post (forgive me, but I couldn’t find it again to link here) that one may be reluctant to put the vPC peer keepalive, an arguably important service, on the regular management network. If that is the case, and that same person happens to have spare 10 Gb interfaces, a two-link port channel can be created between the 5020s, separate from the vPC peer link, and the keepalive traffic sent over those links. This configuration looks like this:

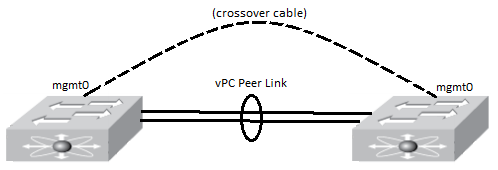
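As a rough sketch of that second option, the config on the first 5020 might look something like the following. Everything specific here is an assumption for illustration and not taken from the original post: VLAN 900, port-channel 20, Ethernet1/39-40, the 10.255.255.0/30 addressing, and vPC domain 1 are all made-up values. It also assumes the keepalive VLAN is kept off the vPC peer link.

```
! Hypothetical values throughout: VLAN 900, Po20, Eth1/39-40, 10.255.255.0/30
feature lacp
feature interface-vlan

vlan 900
  name vpc-keepalive

! Dedicated two-link port channel, separate from the vPC peer link
interface port-channel20
  switchport mode access
  switchport access vlan 900

interface Ethernet1/39-40
  switchport mode access
  switchport access vlan 900
  channel-group 20 mode active

! SVI that sources the keepalive (default VRF)
interface Vlan900
  ip address 10.255.255.1/30
  no shutdown

vpc domain 1
  peer-keepalive destination 10.255.255.2 source 10.255.255.1 vrf default
```

The second 5020 would mirror this with the source and destination addresses swapped.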

The other day, while integrating a second pair of 5020s at a customer site, I saw that they had configured the keepalive link a bit differently, in a way that let them manage their 5020s like the rest of their networking devices: with switch virtual interfaces (SVIs). Instead of using the mgmt0 interface for management traffic at all, they connected a crossover cable between the two 5020s’ mgmt0 interfaces and sent only peer keepalive traffic over it. Then they configured an SVI in their management VLAN, which they used to manage the switch remotely. It looked like this, then:
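In config terms, a minimal sketch of this layout on the first 5020 could look like the block below. The addresses are assumptions for illustration only: 10.255.255.0/30 on the crossover link, 192.168.100.11 for the switch's own SVI in management VLAN 100, and vPC domain 1. The customer's actual values were different and are not reproduced here.

```
! mgmt0 carries only peer keepalive traffic over the crossover cable
! (hypothetical /30; mgmt0 is in the management VRF by default)
interface mgmt0
  ip address 10.255.255.1/30

vpc domain 1
  peer-keepalive destination 10.255.255.2 source 10.255.255.1 vrf management

! In-band management through an SVI in the management VLAN (default VRF)
feature interface-vlan

interface Vlan100
  ip address 192.168.100.11/24
  no shutdown
```

The peer switch would use 10.255.255.2 on mgmt0, swap the keepalive source and destination, and take its own address in VLAN 100.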

They also had to add a default route in each VRF, both management and default. For instance, if their management VLAN is 100, with an SVI of 192.168.100.1 on their layer 3 core switch, then their config would look like this:

Nexus(config)# vrf context management

Nexus(config-vrf)# ip route 0.0.0.0/0 192.168.100.1

And then a default route in the default vrf:

Nexus# conf t

Nexus(config)# ip route 0.0.0.0/0 192.168.100.1
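To sanity-check a setup like this, a few show commands are usually enough. This is only a sketch of what one might run, with 192.168.100.1 taken from the example above; output is omitted because it varies by NX-OS release.

```
Nexus# show vpc peer-keepalive
Nexus# show ip route vrf management
Nexus# show ip route
Nexus# ping 192.168.100.1
```

The first command reports whether the keepalive peer is alive and which source, destination, and VRF are in use. The two route commands show the default route in the management and default VRFs respectively, and the ping (sent from the default VRF) tests reachability of the core switch's SVI.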

Oh the horror! Well, I mean, no, I stand by it, oh the horror! 🙂

crap!!!!!!!!!

I need to implement the exact same thing, but I have a concern. I also want to connect both mgmt ports via a crossover cable and run the keepalive through that. If I configure a default route to the SVI, then all my other default traffic will go to the SVI. What will the config look like with the routes?

The Nexus comes with two default VRFs: one is named “management” and one is named “default.” The mgmt0 interface is in the management VRF by default. All other ports are in the default VRF by default. In your case, when you configure the mgmt0 interface for the crossover cable, you can also add the following config:

vrf context management

ip route 0.0.0.0/0 [partner-5k-mgmt0-interface]

Then create a default route for all other traffic by simply adding a default route statement:

ip route 0.0.0.0/0 [typical-default-gw]

If you’re using the 5ks as L2 switches only, then you don’t even need a default route in the default VRF. The mgmt0 interface is an L3 interface by default, so you’ll want to add the ip route statement to the management VRF.