SRM error – failed to recover datastore. An error occurred during host configuration

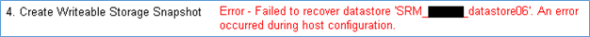

Posted: October 30, 2013 | Filed under: SRM, VMware | Tags: An error occurred during host configuration, failed to recover datastore, site recovery manager, srm

I’d like to share an error I kept hitting when running test recoveries with SRM 5.1.1 against a NetApp running ONTAP 8.1.2. The datastores in question were NFS. The error in the SRM report was consistently in Section 4, Create Writable Storage Snapshot, but strangely it landed on different datastores: first datastore 6, then datastore 5, then back to datastore 6. I made no changes to the environment between tests. Weird, huh? The exact error in the report is:

“Error – Failed to recover datastore ‘<datastore6>’. An error occurred during host configuration.”

I didn’t find anything significant in the SRM logs at the Protected Site, but something did show up in the Recovery Site SRM logs. You can find the SRM logs on the SRM server in \ProgramData\VMware\VMware vCenter Site Recovery Manager\Logs.

Searching the latest log for the string “error” around the time of the last test run, I found this tidbit.

2013-10-29T22:41:38.504-05:00 [07316 error 'StorageProvider' opID=B1ECBE6F-00006511:fb79] Failed to mount NFS volume '<redacted_ip_address>:/vol/testfailoverClone_nss_v10745371_SRM_<redacted>_datastore06' on host 'host-43': (vim.fault.PlatformConfigFault) {

--> dynamicType = <unset>,

--> faultCause = (vmodl.MethodFault) null,

--> faultMessage = (vmodl.LocalizableMessage) [

--> (vmodl.LocalizableMessage) {

--> dynamicType = <unset>,

--> key = "vob.vmfs.nfs.mount.error.limit.exceeded",

--> arg = (vmodl.KeyAnyValue) [

--> (vmodl.KeyAnyValue) {

--> dynamicType = <unset>,

--> key = "1",

--> value = "<redacted_ip_address>",

--> },

--> (vmodl.KeyAnyValue) {

--> dynamicType = <unset>,

--> key = "2",

--> value = "/vol/testfailoverClone_nss_v10745371_SRM_<redacted>_datastore06",

--> }

--> ],

--> message = "NFS mount <redacted_ip_address>:/vol/testfailoverClone_nss_v10745371_SRM_<redacted>_datastore06 failed: NFS has reached the maximum number of supported volumes.",

--> }

--> ],

--> text = "",

--> msg = "An error occurred during host configuration."

--> }

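Did you catch that? The lines to look at are the key, vob.vmfs.nfs.mount.error.limit.exceeded, and the message ending in “NFS has reached the maximum number of supported volumes.” The maximum number of NFS mounts per host had been exceeded, so further attempts to mount NFS datastores failed. I figure it’s largely chance which datastore gets mounted before another as the recovery process moves down the datastore list, which would explain why the failure hopped between datastore 5 and datastore 6.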
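
If you’d rather not eyeball the log, here is a rough Python sketch of the same search. It assumes every entry looks like the single excerpt above (a timestamp, then a bracketed header with the level and source, then "-->" continuation lines); the function name find_errors and both regular expressions are my own inventions, not anything SRM ships.

```python
import re

# Header of a log entry, modelled on the one excerpt shown above, e.g.
# 2013-10-29T22:41:38.504-05:00 [07316 error 'StorageProvider' opID=...] Failed to ...
ENTRY_RE = re.compile(
    r"^(?P<ts>\d{4}-\d{2}-\d{2}T\S+) \[\d+ (?P<level>\w+) "
    r"'(?P<source>[^']+)'[^\]]*\] (?P<text>.*)$"
)
# Continuation line that carries the human-readable fault message.
MESSAGE_RE = re.compile(r'^-->\s*message = "(?P<message>.*)",?\s*$')


def find_errors(lines):
    """Return one dict per 'error'-level entry, with any fault messages attached."""
    errors = []
    current = None  # the error entry whose continuation lines we are reading
    for raw in lines:
        line = raw.strip()
        if not line:
            continue  # skip blank lines between entries
        header = ENTRY_RE.match(line)
        if header:
            current = None
            if header.group("level") == "error":
                current = {
                    "timestamp": header.group("ts"),
                    "source": header.group("source"),
                    "text": header.group("text"),
                    "messages": [],
                }
                errors.append(current)
            continue
        if current is not None:
            found = MESSAGE_RE.match(line)
            if found:
                current["messages"].append(found.group("message"))
    return errors
```

You would feed it an open log file from the Logs folder mentioned earlier, something like find_errors(open(path, errors="replace")), where path is whichever log file covers your test run.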

Changing the maximum allowable NFS mounts from the default of 8 to something that isn’t likely to be exceeded in your environment, like 64, worked a charm! Later test recoveries were successful. You can find this advanced setting by selecting a host, clicking the Configuration tab, then, under Software, clicking Advanced Settings. Choose NFS and scroll down to NFS.MaxVolumes. It is a per-host setting, so change it on every host that may need to mount the recovered datastores. In vSphere 5.0 and later, the maximum number of NFS datastore mounts is 256, up from 64 in the 4.1 days.
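
If you have a lot of hosts and prefer the command line to clicking, the same setting can be changed with esxcli from the ESXi shell. Below is a small, hedged Python sketch that only builds that command and sanity-checks the value first. The helper name nfs_max_volumes_command and the constants are hypothetical, and treating the default of 8 as the lower bound is my assumption; the 8 and 256 figures are simply the ones quoted in this post.

```python
# Figures quoted in the post: default of 8, ceiling of 256 on vSphere 5.0 and later.
DEFAULT_NFS_MAX_VOLUMES = 8
VSPHERE5_NFS_MAX_VOLUMES = 256


def nfs_max_volumes_command(value):
    """Build the esxcli argument list that sets NFS.MaxVolumes to `value`."""
    # Assumption: anything below the default or above the ceiling is a typo.
    if not DEFAULT_NFS_MAX_VOLUMES <= value <= VSPHERE5_NFS_MAX_VOLUMES:
        raise ValueError(
            "NFS.MaxVolumes should be between %d and %d, got %d"
            % (DEFAULT_NFS_MAX_VOLUMES, VSPHERE5_NFS_MAX_VOLUMES, value)
        )
    return [
        "esxcli", "system", "settings", "advanced", "set",
        "-o", "/NFS/MaxVolumes",  # advanced option path
        "-i", str(value),         # integer value to apply
    ]


if __name__ == "__main__":
    # Prints: esxcli system settings advanced set -o /NFS/MaxVolumes -i 64
    print(" ".join(nfs_max_volumes_command(64)))
```

The sketch doesn’t connect to anything; you would still run the printed command on each host yourself (over SSH, for example), and it is worth confirming the new value in Advanced Settings afterwards.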

Hope this helps somebody, because it took me a while to find it.