New to NetApp? Here are the default Snapshot settings explained for ONTAP 8.1

Posted: June 18, 2013 Filed under: NetApp, Storage | Tags: default snap settings, default snapshot settings, default snapshots, snap settings, snapshot settings 2 Comments

Snapshots are enabled by default when a volume is created. They follow the schedule as seen from the CLI command snap sched: 0 2 6@8,12,16,20

and from the System Manager GUI. Note the default Snapshot Reserve is 5% and that the checkbox for Enable scheduled Snapshots is checked by default.
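For readers new to the format, the three fields in that schedule are the number of weekly, nightly, and hourly Snapshot copies to keep, with an optional @ list of the hours at which the hourly copies are taken. Here is a minimal Python sketch of how the string breaks down. Note that parse_snap_sched is a hypothetical helper written for illustration, not a NetApp tool.

```python
def parse_snap_sched(schedule):
    """Split a 'weekly nightly hourly[@hours]' schedule string into its parts."""
    weekly, nightly, hourly_field = schedule.split()
    # The hourly field may carry an optional '@h1,h2,...' list of clock hours.
    hourly, _, hours = hourly_field.partition("@")
    return {
        "weekly": int(weekly),
        "nightly": int(nightly),
        "hourly": int(hourly),
        "hourly_at": [int(h) for h in hours.split(",")] if hours else [],
    }

print(parse_snap_sched("0 2 6@8,12,16,20"))
```

Read that way, the default keeps no weekly copies, two nightly copies, and six hourly copies taken at 8:00, 12:00, 16:00, and 20:00.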

Cisco Nexus Fibre Channel configuration template

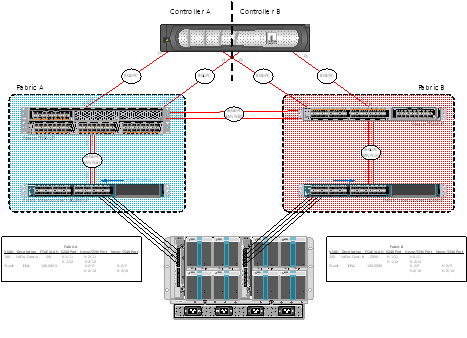

Posted: May 23, 2013 Filed under: Cisco Nexus, Networking, Storage | Tags: 5548up, 5596up, cisco nexus, cisco ucs, device-alias, eisl, e_port, fc, fc config, fc configuration, fc end host mode, fc switch mode, fc ucs, fibre channel, fibre channel configuration template, fibre channel template, f_port, isl, n_port, te_port, tf_port, ucs, vsan, zone, zoneset, zoning 6 Comments

I recently had the opportunity to configure native fibre channel in my test lab at work using Nexus 55xx series switches and Cisco’s UCS. What I’ll do in this post is share my templatized fibre channel configuration in a somewhat ordered way, at least from the Nexus point of view. This test lab was configured with the attitude that it should show off the capabilities of the hardware and software. Concepts included in this initial configuration include NPIV, NPV, SAN port-channels, F_Port trunking, VSANs, device aliases, and of course, standard FC concepts like zones and zonesets.

Let me first share the end-state as of today, what I’ll call Phase I. I’ll explain what my initial plan was for Phase I and, after learning a bit more, what I plan to do for Phase II. Please feel free to correct me in the comments below – I made a lot of mistakes configuring this and I wouldn’t be surprised if there’re a few more in there.

Of showers and sysstat

Posted: February 20, 2013 Filed under: NetApp, Storage | Tags: flash cache, netapp cpu %, netapp cpu utilization, netapp performance, performance, performance analysis, sysstat Leave a comment

I was asked by a client yesterday in passing how to check CPU utilization on one of their NetApp filers. I didn’t immediately know where to go and we quickly moved on to something else. So as I was showering this morning, as I do many mornings, I remembered that, of course, you can use sysstat to view performance data. Anyways, this is a real nice way to view instantaneous general performance data. Options for this command are shown below.

deathstar> sysstat ?

usage: sysstat [-c count] [-s] [-u | -x | -m | -f | -i | -b] [interval]

-c count – the number of iterations to execute

-s – print out summary statistics when done

-u – print out utilization format instead

-x – print out all fields (overrides -u)

-m – print out multiprocessor statistics

-f – print out FCP target statistics

-i – print out iSCSI target statistics

-b – print out SAN statistics

interval – the interval between iterations in seconds, default is 15 seconds
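To tie the usage text back to the original CPU question, one combination that fits it is the utilization format, sampled every second for ten iterations, with a summary at the end. The Python sketch below only assembles that command line from the flags listed above. The helper name sysstat_args and the chosen values are my own assumptions, and it does not run anything on a filer.

```python
def sysstat_args(mode=None, count=None, summary=False, interval=None):
    """Build a sysstat argument list using only flags from the usage text."""
    # The usage text lists these format flags as alternatives: pick at most one.
    modes = {"u", "x", "m", "f", "i", "b"}
    if mode is not None and mode not in modes:
        raise ValueError(f"unknown mode flag: -{mode}")
    args = ["sysstat"]
    if count is not None:
        args += ["-c", str(count)]
    if summary:
        args.append("-s")
    if mode is not None:
        args.append(f"-{mode}")
    if interval is not None:
        args.append(str(interval))
    return args

# Hypothetical choice: utilization view, 10 samples, 1 second apart, with summary.
print(" ".join(sysstat_args(mode="u", count=10, summary=True, interval=1)))
```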

What’s the (SnapMirror) syntax, Kenneth?

Posted: February 19, 2013 Filed under: NetApp, Storage | Tags: /etc/snapmirror.conf, destination filer does not match hostname ignoring line, filer, ignoring line, invalid destination, NetApp, snap mirror, snapmirror, snapmirror.conf 1 Comment

I had the opportunity to configure SnapMirror for a client today and it gave me a bit of a headache. I did what I thought was my due diligence: reading the relevant vendor documentation for SnapMirror for each version of Data ONTAP, 7.3.2 and 8.0.3P3. What I failed to do was read a few lines further than I actually did – I missed a simple piece of syntax that turned a 30-minute WebEx into a 2-hour ordeal. I learned a good lesson about SnapMirror during this engagement, though, and I’d like to share it.

The SnapMirror of these three volumes was actually for a data migration because the source filer is being decommissioned. The general steps required in the engagement today were as follows:

- Run a cable between what will be the dedicated replication links on each filer

- Configure each interface with IP settings

- Ensure SnapMirror is licensed and enabled on each filer

- Configure /etc/hosts and /etc/snapmirror.allow files on source filer

- Configure /etc/hosts and /etc/snapmirror.conf files on destination filer

- Initialize the baseline replication
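To make the /etc/snapmirror.conf step a little more concrete, here is a small Python sketch that formats entries for the destination filer. It assumes the usual 7-Mode layout of source:volume, destination:volume, an arguments field ("-" for defaults), and a four-field schedule (minute, hour, day of month, day of week). The filer and volume names are hypothetical, and the helper snapmirror_conf_line is mine, so check the result against the documentation for your ONTAP version.

```python
def snapmirror_conf_line(src_filer, src_vol, dst_filer, dst_vol,
                         args="-", schedule="0 23 * *"):
    """Format one /etc/snapmirror.conf entry.

    Assumed layout: source:volume destination:volume arguments schedule,
    where schedule is minute, hour, day-of-month, day-of-week.
    """
    if len(schedule.split()) != 4:
        raise ValueError("schedule needs four fields: minute hour dom dow")
    return f"{src_filer}:{src_vol} {dst_filer}:{dst_vol} {args} {schedule}"

# Hypothetical filer and volume names, for illustration only.
for vol in ("vol1", "vol2", "vol3"):
    print(snapmirror_conf_line("oldfiler", vol, "newfiler", vol))
```

Generating the lines from one function keeps the three volume entries consistent, which matters when a single misplaced field is enough to cost you an afternoon.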

My NCDA preparation experience

Posted: August 14, 2012 Filed under: Certifications, NetApp, Storage | Tags: data ontap, ncda, ncda resources, ncda study, NetApp, netapp certified, netapp certified data ontap, ns0-154 4 Comments

Edit: To jump to the good stuff, check out Neil Anderson’s free eBook, How to Build a NetApp ONTAP 9 Lab for Free!

I’d like to share a quick note about my experience in studying for and taking the NetApp Certified Data Management Administrator exam for Data ONTAP 8.0 7-Mode, NS0-154. Perhaps someone out there will find the links and study methods here useful.

I’ve never held a pure Storage Administrator position, but I did recently complete a year-long contract implementing NetApp FAS3240 and FAS3270 filers as part of an Enterprise Virtualization Project for the US Army in Southwest Asia. I was actually hired as a Network Engineer to install, configure and migrate to Cisco Nexus 5020s and 2224 Fabric Extenders, but coming from a Systems background, I was able to perform the role of Implementation Engineer for the VMware, NetApp, and Nexus environments. It was a very satisfying role overall and one in which I gained a lot of varied experience.

Where am I? It’s dark and I’ve lost my network settings! How innocuous editing of NetApp config file can lead to lost IPs

Posted: February 24, 2012 Filed under: NetApp, Storage | Tags: /etc/rc, configure /etc/rc, configure netapp, configure netapp /etc/rc, edit /etc/rc, edit rc file, etc, etc rc, fas3270, filer lost ip settings, hosts file, ifconfig, ifgrp, interface group, keyboard layout, NetApp, netapp fas configuration, netapp lost ip address, rc, rc file, windows notepad 3 Comments

So I was performing an initial configuration of a FAS3270 the other day when I changed the interface group information via PuTTY. Specifically, I deleted and recreated the interface groups manually instead of running setup. After I did this and following a reboot of the filer, the IP addresses for both interface groups were missing. Performing an ‘ifconfig -a’ before the reboot, I saw the IP addresses assigned correctly:

How to Configure LUN Masking with Openfiler 2.99 and ESXi 4.1

Posted: December 22, 2011 Filed under: Storage, VMware | Tags: configure lun masking, configure openfiler, esx, esxi, esxi 4.1, lun masking, openfiler, openfiler 2.99 8 Comments

This is a duplicate post on this blog but for good reason. I’m back home for vacation and my on-ramp to the interwebs is finally high speed DSL and more reliable than when I first posted several months ago. Therefore, I’m able to include my original screenshots with this post. I had to remove the screenshots in the first post because they wouldn’t upload. I hope this post will give you that visual aid that’s so helpful in walkthroughs.

Binding iSCSI Port Names to VMware Software iSCSI Initiator – ESXi 4.1

Posted: October 3, 2011 Filed under: Networking, Storage, VMware | Tags: 4.1, bind, esxcli, iscsi, iscsi initiator, port groups, software iscsi initiator, vmware, vsphere Leave a comment

For my notes, I’m sharing what I’ve found searching the ‘net to bind VMkernel NICs to VMware’s built-in iSCSI software initiator in ESXi 4.1. I know ESXi 5.0 has changed this process to a nice GUI, but we’re stuck with the CLI in 4.1.

If you’re configuring jumbo frames as I’ve shown in a previous post, bind the VMkernel NICs after configuring jumbo frames.

Assuming you have two uplinks for iSCSI traffic, on the vSwitch of your iSCSI port group, set one uplink, temporarily, to unused. You’ll also want to note the vmhba# of the software iSCSI adapter. You can view this from the Configuration tab > Storage Adapters and viewing the iSCSI Software Adapter. You’ll also need to note the VMkernel NIC names of each iSCSI port group. You can view these from the Configuration tab > Networking and looking at the iSCSI port group. It will show the iSCSI port group name, the vmk#, IP address, and VLAN if you have one configured. Then from a CLI, either via the console or SSH, execute the following commands for each iSCSI port name:

Example: esxcli swiscsi nic add -n vmk# -d vmhba#
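Since the command has to be repeated for each VMkernel NIC, a tiny Python sketch can print one line per port so nothing gets missed. It only fills in the placeholders from the example above. The adapter name vmhba33 and the NIC names vmk1 and vmk2 are hypothetical stand-ins for whatever your host shows, and bind_commands is just an illustrative helper.

```python
def bind_commands(vmhba, vmk_nics):
    """Return one 'esxcli swiscsi nic add' command per VMkernel NIC."""
    return [f"esxcli swiscsi nic add -n {vmk} -d {vmhba}" for vmk in vmk_nics]

# Hypothetical names: substitute the vmhba# and vmk# values noted from the
# Storage Adapters and Networking views.
for command in bind_commands("vmhba33", ["vmk1", "vmk2"]):
    print(command)
```

The printed lines are meant to be pasted into the ESXi console or an SSH session. The script itself does not connect to the host.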

How to Configure LUN Masking with Openfiler 2.99 and ESXi 4.1

Posted: September 11, 2011 Filed under: Storage, VMware | Tags: esxi, lun masking, openfiler, vmware 13 Comments

Note: If you’d like to see screenshots for this article, check out this other post.

I’ve been building a test environment to play with vSphere 4.1 before we begin our implementation. In order to experiment with the enterprise features of vSphere I needed shared storage between my ESXi hosts. As always, I turned to Openfiler. Now, I’ve deployed Openfiler before, but it was just one ESXi host and a single LUN. It was easy. There were plenty of good walkthroughs on how to set this up in such a way. But using the Google-izer, I couldn’t find a single page that explained how to configure Openfiler for shared storage between multiple hosts. When I finally got it working, I felt accomplished and decided to document the process for future reference. Maybe someone out there will find it useful, too.

File System Alignment in Virtual Environments

Posted: June 24, 2011 Filed under: NetApp, Storage, VMware, Windows | Tags: alignment, file system, file system alignment in virtual environments, storage, vmware 1 Comment

In speaking to my fellow Implementation Engineers and team leads, I’ve come to learn file system misalignment is a known issue in virtual environments and can cause performance issues for virtual machines. A little research has provided an overview of the storage layers in a virtualized environment, details on the proper alignment of guest file systems, and a description of the performance impact misalignment can have on the virtual infrastructure. NetApp has produced a white paper that speaks to file system alignment in virtual environments: TR 3747, which I’ve reproduced below.

In any server virtualization environment that uses shared storage, VMs reach their data through several layers of storage. Shared storage can be presented to the hypervisor in different ways, and each way involves different storage layers.

VMware vSphere 4 has four ways of using shared storage for deploying virtual machines: