Recent Cisco UCS Enhancements

Posted: June 17, 2015 Filed under: Cisco UCS | Tags: 2.2, 2.2(4), 2.2(5), cisco ucs, fabric interconnect traffic evacuation, per fabric interconnect chassis reacknowledgement, release notes, server packs, ucs, ucs server pack, ucs server packs

Reading the release notes is always a good idea, but boring. Still, I perked up today while perusing the latest release notes for 2.2(5) ahead of an upcoming implementation. Here are a few items I think are worth mentioning. Note that all of these features were released in 2.2(4).

Server Packs

Clearly I didn’t deploy any UCS systems around the time that 2.2(4) was released, because I totally missed this feature. In the distant IT past (pre-2.1 days), both the Infrastructure and Server firmware had to be at the same level to stay in support. Then came 2.1, which introduced backward compatibility with older Server firmware, so you’re not necessarily forced to upgrade your blade firmware at the same time as your Infrastructure firmware. Nice. Now with 2.2(4) and Server Packs, the reverse also works: newer blade firmware can run against older Infrastructure firmware. Even better. Of course, this comes with a caveat: the feature starts at 2.2(4), so at this point only 2.2(4) and 2.2(5) support such a configuration. But this is cool. Below is the latest mixed firmware version table from the release notes.

Read the rest of this entry »

Options for Managing vSphere Replication Traffic on Cisco UCS

Posted: November 17, 2014 Filed under: Cisco UCS, SRM | Tags: cisco ucs, ucs, vr, vsphere replication

I was recently designing a vSphere Replication and SRM solution for a client and I stated we would use static routes on the ESXi hosts. When asked why, I was able to 1. discuss why the default gateway on the management network wouldn’t work and 2. present some options as to how we could separate the vSphere Replication traffic in a way that would allow flexibility in throttling its bandwidth usage.

You won’t see Network I/O Control (NIOC) listed here, because this particular client didn’t have Enterprise Plus licensing and therefore wasn’t using a vDS. In addition, this client was running a fibre channel SAN on top of Cisco UCS with only a single VIC in each blade. That configuration doesn’t work well with NIOC, because NIOC doesn’t take into account the FC traffic that shares bandwidth with all the Ethernet traffic NIOC *is* managing.
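As a rough illustration of the static-route approach, here is a minimal Python sketch that builds the `esxcli network ip route ipv4 add` command for a host. The subnets and gateway are hypothetical placeholders, not the client’s actual addressing, and the helper name `static_route_command` is made up for this example.

```python
import ipaddress


def static_route_command(network: str, gateway: str) -> str:
    """Build the esxcli command that adds a static IPv4 route on an ESXi host."""
    net = ipaddress.ip_network(network)   # raises ValueError on a malformed network
    gw = ipaddress.ip_address(gateway)    # raises ValueError on a malformed address
    # Sanity check (an assumption of this sketch): the next hop should be on a
    # local subnet, not inside the remote network we are trying to reach.
    if gw in net:
        raise ValueError("gateway must not be inside the destination network")
    return f"esxcli network ip route ipv4 add --network {net} --gateway {gw}"


# Hypothetical values: the remote site's replication subnet, reached through
# the router on the local replication VLAN.
print(static_route_command("192.168.50.0/24", "10.10.20.1"))
```

Generating the command from validated input keeps the route identical across every host in the cluster, which matters because a static route has to be added on each ESXi host individually.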

Real World Cisco UCS Adapter Placement

Posted: October 29, 2014 Filed under: Cisco UCS | Tags: adapter placement, Cisco, ciscoucs, placement policies, placement policy, ucs, vic, vic1240, vic1280, vnic placement 5 Comments

I had the opportunity recently to deploy 18 B420 M3 blades across two sites. Having only deployed half-width blades over the last two years, I had to change my usual Service Profile configuration for ESXi hosts to ensure the vNICs and vHBAs were properly spread across the two VICs installed in each blade. Each B420 had a VIC 1240 and a VIC 1280. The Service Profile for the blades includes six vNICs and two vHBAs. Six vNICs were used in order to take advantage of QoS policies at the UCS level. The six vNICs were:

- vNIC FabA-mgmt

- vNIC FabA-vMotion

- vNIC FabA-VM-Traffic

- vNIC FabB-mgmt

- vNIC FabB-vMotion

- vNIC FabB-VM-Traffic
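To make the idea of spreading vNICs across two adapters concrete, here is a small Python sketch that deals the six vNICs above out to the two VICs in turn. This round-robin scheme is only an assumption for illustration, not necessarily the placement used in the deployment, and it leaves out the two vHBAs. In UCS itself the equivalent would be expressed through a placement policy rather than code.

```python
from collections import defaultdict
from itertools import cycle

# vNIC names from the list above; adapter names from the B420 M3 hardware.
VNICS = [
    "FabA-mgmt", "FabA-vMotion", "FabA-VM-Traffic",
    "FabB-mgmt", "FabB-vMotion", "FabB-VM-Traffic",
]
ADAPTERS = ["VIC 1240", "VIC 1280"]


def place_round_robin(vnics, adapters):
    """Return {adapter: [vnic, ...]} by dealing vNICs out to adapters in order."""
    placement = defaultdict(list)
    for vnic, adapter in zip(vnics, cycle(adapters)):
        placement[adapter].append(vnic)
    return dict(placement)


placement = place_round_robin(VNICS, ADAPTERS)
for adapter, names in placement.items():
    print(adapter, names)
```

With three traffic types and two adapters, dealing the vNICs out in this order happens to put the fabric A and fabric B vNIC for each traffic type on different VICs, so losing one adapter would still leave a path for management, vMotion, and VM traffic.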

Cisco Nexus Fibre Channel configuration template

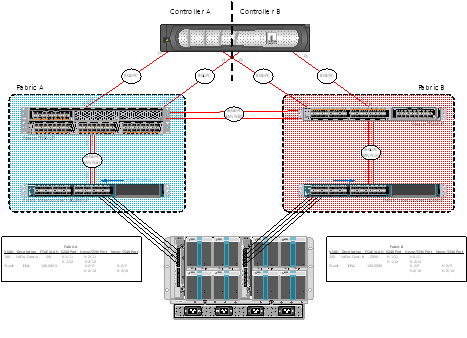

Posted: May 23, 2013 Filed under: Cisco Nexus, Networking, Storage | Tags: 5548up, 5596up, cisco nexus, cisco ucs, device-alias, eisl, e_port, fc, fc config, fc configuration, fc end host mode, fc switch mode, fc ucs, fibre channel, fibre channel configuration template, fibre channel template, f_port, isl, n_port, te_port, tf_port, ucs, vsan, zone, zoneset, zoning 6 Comments

I recently had the opportunity to configure native fibre channel in my test lab at work using Nexus 55xx series switches and Cisco’s UCS. In this post I’ll share my templatized fibre channel configuration in a somewhat ordered way, at least from the Nexus point of view. This test lab was configured with the attitude that it should show off the capabilities of the hardware and software. Concepts covered in this initial configuration include NPIV, NPV, SAN port-channels, F_Port trunking, VSANs, device aliases, and of course, standard FC concepts like zones and zonesets.

Let me first share the end state as of today, which I’ll call Phase I. I’ll explain what my initial plan was for Phase I and, after learning a bit more, what I plan to do for Phase II. Please feel free to correct me in the comments below. I made a lot of mistakes configuring this and I wouldn’t be surprised if there are a few more in there.
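To give a feel for what a templatized device-alias, zone, and zoneset configuration can look like, here is a minimal Python sketch that renders the NX-OS commands from a few dictionaries. It is not the template from this lab: the VSAN number, alias names, and pWWNs are all hypothetical, and the helper `zoning_config` is invented for this example.

```python
def zoning_config(vsan, zoneset, zones, aliases):
    """Render NX-OS device-alias, zone, and zoneset commands as text.

    zones   maps a zone name to a list of device-alias names.
    aliases maps a device-alias name to a pWWN string.
    """
    # Refuse to render a zone that references an undefined alias.
    for zone, members in zones.items():
        for member in members:
            if member not in aliases:
                raise KeyError(f"zone {zone} uses unknown alias {member}")

    lines = ["device-alias database"]
    for name, pwwn in aliases.items():
        lines.append(f"  device-alias name {name} pwwn {pwwn}")
    lines.append("device-alias commit")

    for zone, members in zones.items():
        lines.append(f"zone name {zone} vsan {vsan}")
        for member in members:
            lines.append(f"  member device-alias {member}")

    lines.append(f"zoneset name {zoneset} vsan {vsan}")
    for zone in zones:
        lines.append(f"  member {zone}")
    lines.append(f"zoneset activate name {zoneset} vsan {vsan}")
    return "\n".join(lines)


# Hypothetical values, for illustration only.
aliases = {
    "esx01-vhba-a": "20:00:00:25:b5:0a:00:01",
    "array-spa-p0": "50:06:01:60:00:00:00:01",
}
zones = {"esx01_spa": ["esx01-vhba-a", "array-spa-p0"]}
config = zoning_config(10, "fabric-a", zones, aliases)
print(config)
```

Keeping the aliases and zone membership in plain data makes it easy to render the same structure for the second fabric by swapping in its VSAN and pWWNs.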